The popularity of Docker is not without reason. It has vastly changed how developers approach application development.

It has become a standard in the IT industry for packaging, deploying, and running distributed applications with ease.

The key benefit of Docker is that it allows users to package an application with all of its dependencies into a standardized unit called a container.

Since Docker is a containerization platform, it helps to first understand the history behind containerization.

The History Before Containerization

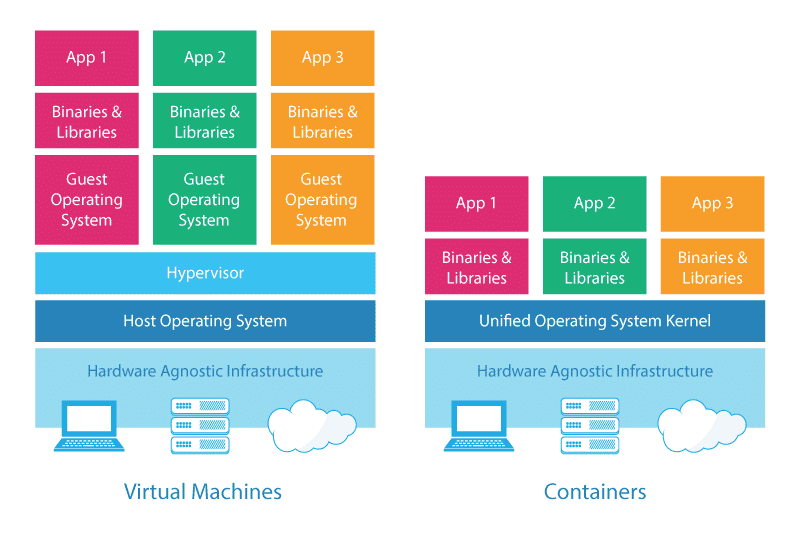

Before containerization came into the picture, the leading way to isolate and organize applications and their dependencies was to place each application in its own virtual machine.

Multiple virtual machines can run on the same physical hardware, and this technique is known as virtualization.

But virtualization has a few drawbacks. Virtual machines are bulky, and running several of them on one host can lead to unstable performance.

The boot process usually takes a long time, and VMs do not solve problems such as portability, software updates, or continuous integration and continuous delivery.

These drawbacks led to the emergence of a new technique called containerization.

Containerization is a type of virtualization that brings virtualization to the operating system level.

While virtualization brings abstraction to the hardware, containerization brings abstraction to the operating system.

Containers vs. Virtual Machines

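The terms “containers” and “virtual machines” are often used interchangeably, but they are not the same thing.

Both provide isolated environments for running applications, but they virtualize at different levels: virtual machines virtualize the hardware, while containers virtualize the operating system.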

Virtual Machines

Virtual machines generally include an entire operating system, packages, and, if required, a few applications.

This is made possible by a hypervisor, which provides hardware virtualization to the virtual machine.

This allows a single server to run many standalone operating systems as virtual guests. Generally speaking, a virtual machine is a system that acts exactly like a computer.

Containers

Containers are similar to virtual machines, except that containers are not whole operating systems. They generally include only the necessary OS packages and applications.

They do not typically contain an entire operating system or rely on hardware virtualization, which is why they are called “lightweight.”

A container is generally used to isolate a running process within a single host to ensure that the isolated processes cannot interact with other processes within that same system – containers sandbox processes from each other.

To put it simply, you can think of a Docker container as a lightweight equivalent of a virtual machine.

Docker enables creating and working with containers as efficiently as possible.

Reasons to Use Docker Containers

- Containers have no guest OS and use the host’s operating system kernel, so they share relevant libraries and resources as and when needed.

- App isolation: If you want to run multiple applications on one server, keeping the components of each application in separate containers will prevent problems with dependency management.

- Applications are processed and executed very quickly, since the application-specific binaries and libraries in a container run directly on the host kernel.

- Booting up a container typically takes only a fraction of a second.

- Containers are lightweight and faster than virtual machines.

What is a Docker Container?

Docker is a platform that packages an application and all its dependencies together in the form of containers.

It uses containers to make it easier to create, deploy, and run applications. Docker binds the application and its dependencies inside a container.

Containers allow a developer to package up an application with all of the parts it needs, such as libraries and other dependencies, and ship it all out as one package.

Let’s say you need to build an application. To make that application available to the public, you need someplace to host it. In the past, you would need to build your own computer.

Then, you would need to set it up as a “server,” a computer dedicated to hosting websites or web services.

However, your application may only be about 300 megabytes in size to start with.

So, why would you want a “virtual machine,” a virtualized environment, that takes up 1 GB or more when your application is much smaller than that?

The concept of a “container” comes in to fix that. Docker does it in the following way: instead of hosting a separate operating system for each application, common resources are shared, and a component called the “Docker Engine” sits on top of the host operating system.
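To make the size argument concrete, here is a rough back-of-the-envelope sketch in Python. It reuses the figures from the example above (a 300 MB application and roughly 1 GB of operating system per virtual machine) and assumes, purely for illustration, three applications on one host and negligible per-container overhead. Real numbers will vary with the base image and the hypervisor.

```python
# Back-of-the-envelope comparison; all figures are illustrative assumptions.
APP_MB = 300          # application size, taken from the example above
GUEST_OS_MB = 1024    # assumed OS footprint carried by each virtual machine
NUM_APPS = 3          # assumed number of applications on one host


def vm_footprint_mb(num_apps: int) -> int:
    """Each VM ships its own guest OS alongside the application."""
    return num_apps * (GUEST_OS_MB + APP_MB)


def container_footprint_mb(num_apps: int) -> int:
    """Containers share one host OS; only the applications are repeated."""
    return GUEST_OS_MB + num_apps * APP_MB


print("Virtual machines:", vm_footprint_mb(NUM_APPS), "MB")
print("Containers:      ", container_footprint_mb(NUM_APPS), "MB")
```

Under these assumptions the gap widens with every additional application, because the operating system cost is paid once instead of once per application.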

The benefit of adopting Docker, or containers in general, is that applications can be deployed and undeployed faster. They start and stop more quickly, switch to another “image” faster, and generally do many things faster.

When getting started, it helps to know the essential elements and tools of the Docker ecosystem.

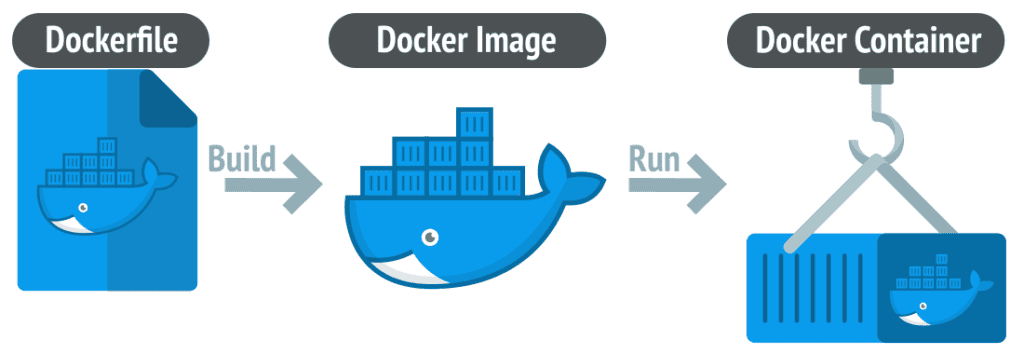

Dockerfile

A Dockerfile is a set of precise instructions stating how to create a new Docker image, including the defaults for containers that are run from it.

It is a text document that contains all the commands that a user can call on the command line to assemble an image.

So, Docker can build images automatically by reading the instructions from a Dockerfile.
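As a rough illustration, the Python sketch below holds the text of a small Dockerfile, splits it into its instructions, and assembles the `docker build` command that would turn it into an image. The base image tag, the file names (`requirements.txt`, `app.py`), and the image tag `myapp:1.0` are hypothetical and chosen only for the example. The parser is deliberately minimal and does not handle multi-line instructions.

```python
# Hypothetical Dockerfile for a small Python app; the base image tag,
# file names, and start command are assumptions made for illustration.
DOCKERFILE = """\
FROM python:3.12-slim
WORKDIR /app
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt
COPY . .
CMD ["python", "app.py"]
"""


def parse_instructions(text: str) -> list[tuple[str, str]]:
    """Split Dockerfile text into (INSTRUCTION, arguments) pairs.

    Blank lines and comments are skipped. Backslash line continuations
    are not handled in this simplified sketch.
    """
    pairs = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        keyword, _, args = line.partition(" ")
        pairs.append((keyword.upper(), args.strip()))
    return pairs


def build_command(tag: str, context_dir: str) -> list[str]:
    """Argument list for `docker build -t <tag> <context_dir>`."""
    return ["docker", "build", "-t", tag, context_dir]


for keyword, args in parse_instructions(DOCKERFILE):
    print(f"{keyword:<8}{args}")

# Pass this list to subprocess.run() on a machine with Docker installed.
print(build_command("myapp:1.0", "."))
```

Each instruction is one step of the build: `FROM` picks the starting image, `COPY` and `RUN` add files and install dependencies, and `CMD` sets the default command for containers started from the result.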

Docker Image

A Docker image can be compared to a read-only template used to create Docker containers. In other words, an image is a blueprint from which an arbitrary number of brand-new containers can be started.

No “currently running commands” are saved in an image. Creating a container from an image is like booting up a machine that was powered down.
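The blueprint idea can be sketched with a toy model in Python. This is not how Docker is implemented; the `Image` and `Container` classes below are hypothetical stand-ins that only mimic two properties described above: an image cannot be modified, and any number of independent containers can be started from it.

```python
from dataclasses import FrozenInstanceError, dataclass, field


@dataclass(frozen=True)
class Image:
    """Toy stand-in for a Docker image: a named, read-only set of files."""
    name: str
    files: tuple  # ((path, content), ...) kept as a tuple so it cannot be mutated


@dataclass
class Container:
    """Toy stand-in for a container: an image plus its own writable layer."""
    image: Image
    writable_layer: dict = field(default_factory=dict)

    def write(self, path: str, content: str) -> None:
        # Writes land in the container's own layer, never in the image.
        self.writable_layer[path] = content

    def read(self, path: str) -> str:
        # The writable layer shadows the files that came from the image.
        if path in self.writable_layer:
            return self.writable_layer[path]
        return dict(self.image.files)[path]


image = Image("myapp:1.0", (("/app/config", "debug=false"),))

# Any number of containers can be started from the same image.
first = Container(image)
second = Container(image)

first.write("/app/config", "debug=true")
print(first.read("/app/config"))   # the change is visible only in `first`
print(second.read("/app/config"))  # `second` still sees the image's version

try:
    image.name = "tampered"  # frozen dataclass: assignment is rejected
except FrozenInstanceError:
    print("image is read-only")
```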

Docker Container

A Docker container is a running instance of a Docker image, and it holds the entire package needed to run the application.

Imagine you’d like to run a command isolated from everything else on the system. It should access only the resources it is allowed to and should not know there is anything else on the machine.

The process running inside a container thinks it is the only one on the machine, and all it sees is a barebones Linux distro plus the stuff described in the image.

A machine running the container should not have to care about what’s inside too much, and the dockerized app does not care if it’s on a Kubernetes cluster or a single server – it will be able to run anyway.

A container can run more than a single process at a time. So, for example, you could package many services into a single container and run them side by side.

When a Docker container is deleted, starting a new container from the same image gives you a fresh start, without any of the changes made in the previous container. Those changes are lost.
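One way to see this for yourself is the command sequence sketched below. The Python code only assembles and prints the `docker` commands, so it runs anywhere; to actually try them, pass each list to `subprocess.run()` on a machine with Docker installed. The container name `demo`, the `alpine` image, and the file path are assumptions picked for the example.

```python
import shlex

IMAGE = "alpine"  # assumed small public image; any image with a shell would do
NAME = "demo"     # hypothetical container name


def lifecycle_commands(name: str = NAME, image: str = IMAGE) -> list[list[str]]:
    """Commands that show changes disappearing once a container is removed."""
    return [
        # 1. Start a container in the background and keep it alive for a while.
        ["docker", "run", "-d", "--name", name, image, "sleep", "300"],
        # 2. Make a change inside the running container.
        ["docker", "exec", name, "sh", "-c", "echo hello > /tmp/note.txt"],
        # 3. Stop and delete the container.
        ["docker", "rm", "-f", name],
        # 4. Start a fresh container from the same image.
        ["docker", "run", "-d", "--name", name, image, "sleep", "300"],
        # 5. Look for the file again; this is expected to fail, because the
        #    new container starts from the image, not from the old container.
        ["docker", "exec", name, "cat", "/tmp/note.txt"],
    ]


for argv in lifecycle_commands():
    print(shlex.join(argv))
```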

Docker Volume

Images don’t change. You can create new ones, but that’s it. Containers, on the other hand, leave nothing behind by default. Therefore, any changes made to a container are lost as soon as it is removed.

To save (persist) data and share it between containers, Docker came up with the concept of volumes. Quite simply, volumes are directories (or files) that live outside the container’s default file system and exist as normal directories and files on the host filesystem.

In other words, Docker volumes enable us to persist data and share it between containers.
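The difference between a container's own files and a volume can be sketched with another toy model in Python. Again, this is not Docker's implementation: the `Volume` and `Container` classes and the paths are hypothetical, and the model only captures the idea that a volume lives outside any single container.

```python
from typing import Optional


class Volume:
    """Toy stand-in for a Docker volume: storage that lives outside any container."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.files = {}


class Container:
    """Toy container: a private writable layer plus optional mounted volumes."""

    def __init__(self, mounts: Optional[dict] = None) -> None:
        self.layer = {}             # discarded together with the container
        self.mounts = mounts or {}  # mount point -> Volume

    def _resolve(self, path: str):
        # Paths under a mount point go to the volume; everything else
        # goes to the container's private layer.
        for mount_point, volume in self.mounts.items():
            prefix = mount_point.rstrip("/") + "/"
            if path.startswith(prefix):
                return volume.files, path[len(prefix):]
        return self.layer, path

    def write(self, path: str, content: str) -> None:
        store, key = self._resolve(path)
        store[key] = content

    def read(self, path: str) -> Optional[str]:
        store, key = self._resolve(path)
        return store.get(key)


data = Volume("appdata")

writer = Container(mounts={"/data": data})
writer.write("/data/report.txt", "persisted")
writer.write("/tmp/scratch.txt", "temporary")
del writer  # removing the container discards its private layer

# A second container mounts the same volume, here at a different path.
reader = Container(mounts={"/mnt": data})
print(reader.read("/mnt/report.txt"))   # still there: the volume outlived `writer`
print(reader.read("/tmp/scratch.txt"))  # gone: it only existed in the removed container
```

With the real CLI, the equivalent is to create a named volume with `docker volume create` and attach it to each container with the `-v` option of `docker run`.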

Conclusion

We hope this article helped you understand the fundamentals of what Docker and Docker containers are and how they have changed software development.

With the knowledge above, you should have a firm grasp of what Docker is about at the core.

You can visit the project’s website or refer to the official documentation for more information about Docker.